
So Machine One requires twoīounces to generate a symbol, while guessing an unknown The expected number of questions is equal to the expected Notice the connection between yes or no questions and fair bounces. Symbol A times one bounce, plus the probability ofī times three bounces, plus the probability ofĬ times three bounces, plus the probability The expected number of bounces is the probability of Now we just take a weightedĪverage as follows. In this case, the firstīounce leads to either an A, which occurs 50% of the time, or else we lead to a second bounce, which then can either output a D, which occurs 25% of the time, or else it leads to a third bounce, which then leads to eitherī or C, 12.5% of the time. Based on where the disc lands, we output A, B, C, or D. That we have two bounces, which lead to fourĮqually likely outcomes. To add a second level, or a second bounce, so Based on which way it falls, we can generate a symbol. Let's assume instead we want to build Machine One and Machine Two, and we can generate symbols by bouncing a disc off a peg in one of two equally likely directions. On average, how many questions do you expect to ask, to determine a symbol from Machine Two? This can be explained Otherwise, we have to ask a third question to identify which of the Otherwise, we are left with two equal outcomes, D or, B and C We could ask, "is it D?". We could start by asking "is it A?", if it is A we are done, only one question in this case. Here A has a 50% chance of occurring, and all other letters add to 50%. Probability of each symbol is different, so we can ask What about Machine Two? As with Machine One, we could ask two questions to determine the next symbol. We can say the uncertainty of Machine One is two questions per symbol. So we simply pick one, such as "is it A?", and after this second question, we will have correctly Of the possibilities, and we will be left with two

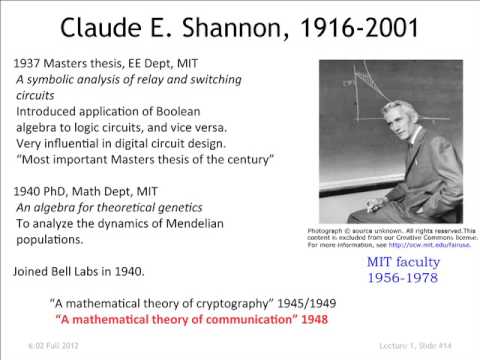

After getting the answer, we can eliminate half For example, our first question, we could ask if it is any two symbols, such as "is it A or B?", since there is a 50% chance of A or B and a 50% chance of C or D. Is to pose a question which divides the possibilities in half. You would expect to ask? Let's look at Machine One. Is the minimum number of yes or no questions If you had to predict the next symbol from each machine, what More information? Claude Shannon cleverly Machine One generatesĮach symbol randomly, they all occur 25% of the time, while Machine Two generates symbols according to the following probabilities. They both output messages from an alphabet of A, B, C, or D. Why should I know What is entropy? Where it will help me? or where it helps the world to know about entropy Is this entropy being used in some real world application, if yes then if you could have quoted a real world example in the video it would have been more clear.
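The weighted average described in the transcript can be checked with a few lines of Python. This is only a minimal sketch: the dictionary names and the `expected_questions` helper are made up for illustration, and the question counts per symbol are read off the questioning strategy (or bounce layout) described above.

```python
from fractions import Fraction

# Symbol probabilities as stated in the transcript.
machine_one = {"A": Fraction(1, 4), "B": Fraction(1, 4),
               "C": Fraction(1, 4), "D": Fraction(1, 4)}
machine_two = {"A": Fraction(1, 2), "B": Fraction(1, 8),
               "C": Fraction(1, 8), "D": Fraction(1, 4)}

# Yes/no questions (equivalently, bounces) needed to reach each symbol.
# Machine One: always two. Machine Two: "is it A?", then "is it D?",
# then one more question to separate B from C.
questions_one = {"A": 2, "B": 2, "C": 2, "D": 2}
questions_two = {"A": 1, "B": 3, "C": 3, "D": 2}


def expected_questions(probabilities, questions):
    """Weighted average: sum of P(symbol) * questions(symbol)."""
    return sum(p * questions[symbol] for symbol, p in probabilities.items())


print(float(expected_questions(machine_one, questions_one)))  # 2.0
print(float(expected_questions(machine_two, questions_two)))  # 1.75
```

Exact fractions are used here so that the 1.75 figure comes out without any floating-point rounding.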

I can't see how machine 1 gives more information than machine 2 just because the data coming out of it is more uncertain. How is uncertainty related to information? What exactly do we mean by information here? To me, information is "what is conveyed or represented by a particular arrangement or sequence of things". Is that the information we are talking about here?

I would also like to explain what "more information" means to me. First I have to choose what I need information about. For example, say I need information about "Computers", and the two machines give me the following:

Machine 1: A computer is an electronic device.

Machine 2: A computer is an electronic device which can perform huge arithmetic operations in a fraction of a second.

Here I would say machine 2 is more informative, as it gives more detailed information about the subject I wanted to know about. Is the second sentence, produced by machine 2, more uncertain than the one from machine 1?

To encode an event occurring with probability $p$ you need at least $\log_2(1/p)$ bits (why? see my answer on "What is the role of the logarithm in Shannon's entropy?"). So in optimal encoding the average length of the encoded message is

$$\sum_i p_i \log_2\left(\tfrac{1}{p_i}\right),$$

that is, the Shannon entropy of the original probability distribution. However, if for probability distribution $P$ you use an encoding which is optimal for a different probability distribution $Q$, then the average length of the encoded message is

$$\sum_i p_i \, \text{code\_length}(i) = \sum_i p_i \log_2\left(\tfrac{1}{q_i}\right).$$

For instance, if we encode according to a distribution $Q$ in which all four letters are equally probable, $Q = (\tfrac{1}{4}, \tfrac{1}{4}, \tfrac{1}{4}, \tfrac{1}{4})$, then we get two bits per letter (for example, we encode A as 00, B as 01, C as 10 and D as 11).
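The average code length described in the answer above can be sketched in Python. The function names are illustrative, not taken from any library, and the sketch assumes $q_i > 0$ wherever $p_i > 0$. The `skewed` list reuses Machine Two's probabilities from the transcript (in the order A, B, C, D) purely as an example input.

```python
import math


def cross_entropy(p, q):
    """Average bits per symbol when symbols follow p but the code is optimal for q.

    Assumes q[i] > 0 wherever p[i] > 0; terms with p[i] == 0 contribute nothing.
    """
    return sum(pi * math.log2(1 / qi) for pi, qi in zip(p, q) if pi > 0)


def entropy(p):
    """Shannon entropy: the average length when the code is optimal for p itself."""
    return cross_entropy(p, p)


uniform = [0.25, 0.25, 0.25, 0.25]   # Machine One
skewed = [0.5, 0.125, 0.125, 0.25]   # Machine Two, order A, B, C, D

print(entropy(uniform))                # 2.0
print(entropy(skewed))                 # 1.75
print(cross_entropy(skewed, uniform))  # 2.0
```

With these inputs the entropies match the two and 1.75 questions per symbol from the transcript, and encoding Machine Two's output with the fixed two-bit code (00, 01, 10, 11) costs two bits per letter, more than its entropy.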
